I have some old, usually forgotten WordPress sites. It happens, like clutter in your office. These old sites, which will never be updated, still generate update requests; they get spam; if you have something like Wordfence installed, you get reminders; and they are potential holes for hackers (if you need to be scared into taking action, nothing like fear, eh?).

Still, I abhor just removing sites from the web. Even if it is totally unlikely anyone will ever look for them, to me it’s incumbent on a web content creator not to rip holes in the fabric.

After getting a few update nags from one of the old sites, I decided to do something: convert them to standalone HTML and remove the WordPress back end.

The first site I tried was one done for a few presentations in 2010 and 2011, maybe only 20 posts and 2 static pages. With some research, I experimented with the WP Static HTML Output plugin, billed as:

Produces a static HTML version of your WordPress install and adjusts URL accordingly

When running it, I opted to create a ZIP file to download. It took less than a minute to generate, and I started testing the files locally.

It creates a directory structure that should work (my clever WordPress setup used a permalink structure based on database ids):

Anything in wp-content/plugins or wp-content/themes is just the files the static version needs (usually CSS, images, maybe JavaScript).

What I found was that it used a BASE HREF tag (which helps make all relative links work), but it was still requesting a lot of things like JS libraries and CSS from the full URLs. I decided to do a batch of global search-and-replaces to make them all relative links starting with “/”, which means the root of the server (in hindsight, I think it would have worked fine as is). But I wanted to test.
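If you’d rather script that search-and-replace than do it by hand in an editor, here is a minimal Python sketch. It assumes the exported pages are UTF-8 .html files and that the old site’s domain (crustyoldsite.org here, standing in for your own) only matters where it shows up in href and src attributes. The names `make_root_relative` and `rewrite_tree` are made up for illustration; they are not part of the plugin.

```python
import re
from pathlib import Path


def make_root_relative(html: str, domain: str) -> str:
    """Turn href/src values pointing at http(s)://domain into root-relative '/...' links."""
    pattern = re.compile(
        r"(\b(?:href|src)\s*=\s*([\"']))"  # attribute name, '=', opening quote (group 2)
        r"https?://" + re.escape(domain)   # the old site's absolute origin
        + r"(?:/|(?=\2))",                 # followed by '/' or by the closing quote
        re.IGNORECASE,
    )
    # Keep the attribute and quote, replace the origin with a single '/'.
    return pattern.sub(r"\1/", html)


def rewrite_tree(root: str, domain: str) -> int:
    """Apply make_root_relative to every .html file under root; return how many changed."""
    changed = 0
    for path in Path(root).rglob("*.html"):
        text = path.read_text(encoding="utf-8")
        new_text = make_root_relative(text, domain)
        if new_text != text:
            path.write_text(new_text, encoding="utf-8")
            changed += 1
    return changed
```

Run it on a copy of the unzipped export, e.g. `rewrite_tree("static-export", "crustyoldsite.org")` (the folder name is hypothetical). The lookahead means a lookalike host such as crustyoldsite.org.example.com is left alone. It does not touch `url(...)` references inside CSS or URLs assembled by JavaScript, so spot-check those.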

I also did a search and replace to remove all search forms (which won’t work). And I turned off comments on all posts so the comment form would not show up.
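The search form removal can be scripted the same way. This sketch assumes the forms carry the markers WordPress’s built-in search form typically uses (role="search", id="searchform", or a class of searchform or search-form); themes vary, so check your own markup first. A regex is a blunt tool for HTML, but for a one-off pass over pages generated by a single theme it does the job. `strip_search_forms` is a hypothetical helper name.

```python
import re

# Assumed markers for a search form; adjust to whatever your theme actually emits.
SEARCH_FORM = re.compile(
    r"<form\b[^>]*\b(?:role=[\"']search[\"']|id=[\"']searchform[\"']"
    r"|class=[\"'][^\"']*\bsearch-?form\b[^\"']*[\"'])[^>]*>.*?</form>",
    re.IGNORECASE | re.DOTALL,  # DOTALL lets .*? span the lines inside the form
)


def strip_search_forms(html: str) -> str:
    """Remove every <form>...</form> block that looks like a search form."""
    return SEARCH_FORM.sub("", html)
```

Forms without those markers, such as a contact form, pass through untouched. A search widget’s heading sits outside the form, so it stays behind; that is one reason pulling the widget in the WordPress admin before exporting is cleaner.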

My files, though, would not work locally with this root structure, so I made a subdomain of the main site, e.g. static.crustyoldsite.org, to test. All was good, so I deleted the entire directory from the WordPress site (I kept a copy of wp-config.php just in case I might need to re-install).

And boom, The Secret Revolution is now just an HTML site, no WordPress at all: http://secretrevolution.us/

That’s one less thing to worry about. And one more thing sort of figured out.

I wondered, though: it sure would be nice to have a shiny app button in the Reclaim Hosting cPanel so people could reclaim a site from WordPress. Thinking it might be there, I posted a message in the new community area (UPDATE: This seems to be happening. Go Timmmmyboy Go!)

As usual, both Jim and Tim responded quickly and suggested the SiteSucker OS X app. That is the sound of me slapping my head, as I have that app.

I had another site to try, my old I Hate Running site, which I have not posted to since 2011. Why do I need it sucking database cycles and being an update nag?

This time I was smarter: I did some prep ahead of time. My setup did not have a search form in it, but if you need to remove one, you can easily take it off any widget sidebars/footers. A lot of times, though, it is hard-wired into your theme’s header template (header.php). If I had to, I would just edit the theme directly to take out the search form; it does not matter that you are hacking the theme, since it will go away.

I also checked to see that comments were closed on all posts (luckily I had thought of that before).

UPDATE Aug 26, 2016: Following some great thoughts from Boris Mann in the Reclaim Hosting thread about the metadata that is lost in my approach, I would add a blog export to the WordPress decommissioning list, so at least you have all of the posts and info as XML. There might be other options for exporting the metadata as JSON.
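A WordPress export (Tools → Export) is an XML file in the WXR format, which is RSS with extra namespaced wp: elements. As a quick sanity check that the export really holds everything, here is a sketch that lists each item’s title, link and post type. It matches the post_type element by its local name because the wp namespace URI carries a version number that can differ between WordPress releases. `list_exported_items` is a hypothetical helper, not a WordPress tool.

```python
import xml.etree.ElementTree as ET
from typing import List, Optional, Tuple, Union


def _local(tag: str) -> str:
    """Drop the '{namespace}' prefix ElementTree puts on namespaced tags."""
    return tag.rsplit("}", 1)[-1]


def list_exported_items(wxr_xml: Union[str, bytes]) -> List[Tuple[str, str, Optional[str]]]:
    """Return (title, link, post_type) for every <item> in a WordPress export."""
    root = ET.fromstring(wxr_xml)
    items = []
    for item in root.iter("item"):
        # wp:post_type is namespaced; compare on the bare element name.
        post_type = next((c.text for c in item if _local(c.tag) == "post_type"), None)
        items.append((item.findtext("title", default=""),
                      item.findtext("link", default=""),
                      post_type))
    return items
```

For a real export, pass in the file’s contents as bytes (ET.fromstring accepts bytes as well as str), then filter on the third field; attachments, pages and posts all show up as items.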

And also, because the theme I used had a widget that spans the bottom of all pages (I could have used the footer widgets), I added a little note:

This is of course not necessary, as the site will work as a static site exactly as it did as a WordPress site. But this is how I roll (because that’s what run haters do).

This pre-work will save you any post-edit headaches later.

So I gave the WP Static HTML Output plugin a try again, this time checking both options to put files on the server and generate a ZIP to download.

I clicked the button and watched the browser tab activity icon spin. I went to clean my dishes. When I returned, I saw nothing. The browser had stopped. I tried a second time, with just the download option.

No dice.

My guess is the script timed out; this blog has about 380 posts, a lot more than the first site.

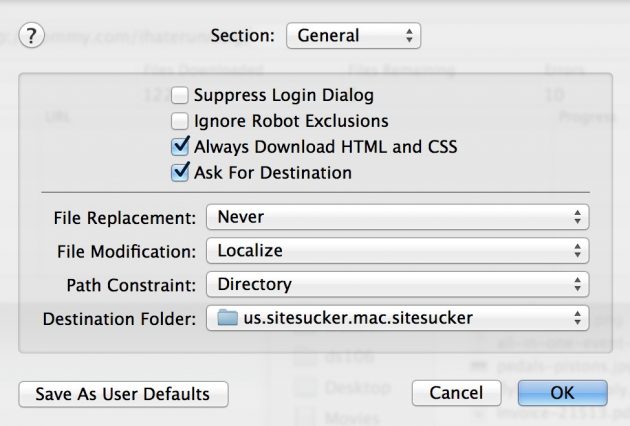

So I gave SiteSucker a try. It had been a while since I used it, and I made a bunch of guesses on the options, bumping up the traversal depth level and the file size. The one mistake I made was the Path Constraint setting: I had it set to Domain, and when it was hitting about 1000 files I noticed it had traversed up the directory and out of my WordPress install. This is the correct setting to keep SiteSucker from going outside the directory of the install:
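The Path Constraint idea boils down to a simple test on each link before following it: same host, and a path at or below the install’s directory. This is only my sketch of that rule for illustration, not SiteSucker’s actual code; `within_install` is a hypothetical name, and example.org/running/ stands in for the real install URL.

```python
import posixpath
from urllib.parse import urlparse


def within_install(url: str, install_root: str) -> bool:
    """True if url is on the same host as install_root and at or below its path."""
    u, root = urlparse(url), urlparse(install_root)
    if u.netloc.lower() != root.netloc.lower():
        return False  # a different host is always out of bounds
    prefix = root.path.rstrip("/") + "/"
    path = posixpath.normpath(u.path or "/")  # collapses '..' so it cannot climb out
    # Compare with a trailing slash so /runningfast/ doesn't count as /running/.
    return (path.rstrip("/") + "/").startswith(prefix)
```

With a domain-wide constraint, the equivalent check would stop at the host comparison, which is how a crawl wanders up and out of a WordPress install that lives in a subdirectory.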

The final sucked site had over 1600 items (many are small image files), weighing in at under 30 MB:

On inspection, I was pleased to see it had converted all full URL links and image src attributes to relative links. I was also a bit smarter on upload, though 30 MB is not a huge ship, and I could simply have deleted the entire server directory and uploaded the new one.

Instead, I tried to avoid re-uploading things in the media directory, since they are already on the server. So from the top, I deleted everything except wp-content (all selected in blue); be sure to get .htaccess, which is often hidden in FTP tools.

Then I went into wp-content and deleted any *.php files and the themes and plugins directories, leaving only media files. I then uploaded the themes and plugins directories from SiteSucker, which, again, are not the full directories but just what the static site needs.
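Because deleting the wrong thing on a live server hurts, it can help to write the cleanup out as a dry run first. This hypothetical sketch lists every file and folder under the install and returns the ones those steps would remove: everything outside wp-content (hidden files like .htaccess included), the themes and plugins folders, and any .php files inside wp-content. It deletes nothing. Review the list, and keep your copy of wp-config.php, before acting on it.

```python
import os
from pathlib import PurePosixPath
from typing import Iterable, List


def list_tree(root: str) -> List[str]:
    """Every file and directory under root, as '/'-separated paths relative to root."""
    found = []
    for dirpath, dirnames, filenames in os.walk(root):
        for name in dirnames + filenames:  # os.walk includes dotfiles like .htaccess
            rel = os.path.relpath(os.path.join(dirpath, name), root)
            found.append(rel.replace(os.sep, "/"))
    return found


def plan_cleanup(paths: Iterable[str]) -> List[str]:
    """Return the subset of paths the cleanup would delete (a dry run; nothing is removed)."""
    doomed = []
    for p in paths:
        parts = PurePosixPath(p).parts
        if not parts:
            continue
        if parts[0] != "wp-content":
            doomed.append(p)  # WordPress core, wp-admin/, wp-includes/, .htaccess, wp-config.php
        elif len(parts) > 1 and parts[1] in ("themes", "plugins"):
            doomed.append(p)  # to be replaced by SiteSucker's trimmed copies
        elif parts[-1].endswith(".php"):
            doomed.append(p)  # any PHP left inside wp-content
    return doomed
```

Anything else in wp-content besides the uploads (cache or upgrade folders, say) survives this plan, so eyeball what’s left before uploading the new themes and plugins folders.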

And shazam! I now have an archived I Hate Running site that has no WordPress running.

So it’s not a one-click job, but still quite doable. And because the media is still there, as is the database, if I ever change my mind (totally unlikely I will ever run again), I could turn this back into a WordPress site.

I still love ya WordPress, but sometimes I don’t need ya.

Top / Featured Image: Modified from CC0 Licensed Pixabay Image

Awesome. There are other tricks that can be handy (say, if you don’t have admin privs on an old WordPress site anymore, or if it’s not running WordPress).

The UNIX command wget can snag local static copies of all files on a site (or just a directory of a site), including supporting .js and .css files.

https://darcynorman.net/2011/12/24/archiving-a-wordpress-website-with-wget/
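Since wget is the scriptable, server-side route, here is a small Python wrapper around a common set of GNU wget mirroring options. These are standard wget flags, but not necessarily the exact ones used in the post linked above; the function names and the example URL are hypothetical.

```python
import subprocess
from typing import List


def wget_mirror_args(url: str, dest: str) -> List[str]:
    """Build a wget command line that mirrors url (and only what's below it) into dest."""
    return [
        "wget",
        "--mirror",            # recursion + timestamping, unlimited depth
        "--convert-links",     # rewrite links in saved pages so they work locally
        "--adjust-extension",  # save HTML pages with an .html suffix
        "--page-requisites",   # also fetch the CSS, JS and images each page uses
        "--no-parent",         # never climb above the starting directory
        f"--directory-prefix={dest}",
        url,
    ]


def mirror(url: str, dest: str) -> None:
    """Run wget; raises CalledProcessError on a non-zero exit status."""
    subprocess.run(wget_mirror_args(url, dest), check=True)
```

Calling `mirror("http://example.org/running/", "archive")` needs wget installed on the machine. wget exits with status 8 when any request gets a server error (a single 404 will do it), so `check=True` may be stricter than you want on an old site with a few broken links.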

There are other apps that can run locally, such as a Mac app called “Webgrabber” and a Windows app called “HTTrack”; I’ve used both with success.

The community forum thread on this led me to start playing with wget as well (I should have known you’d have a blog post on how to do something like this!). I’m especially intrigued by that approach because it could be done server-side so this could be scripted. Finding easier ways to archive work in Domain of One’s Own environments is a huge interest for a lot of folks and now I’m thinking about what a cPanel plugin could look like to offer an easy archival interface for your site using these methods.

Archiving is one thing; I wonder if there might be some workflow that uses WordPress to generate a site, but then syncs or wgets it to a static site, perhaps published in the Amazon cloud? Not sure what kind of site might make it worthwhile.

I love SiteSucker for archiving sites. Moving forward, I’m totally going to implement your tweaks (turning off comments on posts and setting the path constraint). Thank you for sharing those tweaks! (And I’m archiving your post in my Evernote for future reference.)

It did the job thoroughly; you might save some hiccups if you read the docs, since there are a lot of settings. If I understand correctly, it’s a GUI front end to the UNIX wget command.

I can only add: download the ImageOptim app on your Mac and just drag-n-drop the downloaded folder onto it to further compress all the images.

Great suggestion, Dan. Leaner is better for static sites, every byte counts.

Hi Tim,

My name is Claudio, and I’m the founder of HardyPress (www.hardypress.com).

We just built a platform where you can use your WordPress as usual, but then a staticized version of the site gets published for the rest of the world to see. Something like you explained in your blog post, but automated and hosted 🙂

We also seamlessly support Contact Form 7, and we take care of scraping your website’s pages and augmenting the search box to provide instant suggestions, so for most websites everything will work just fine, even if the site is completely static.

You can archive your website, and still be able to make changes when needed.

If you want to give it a try, I would be happy to hear your feedback.

Thanks Claudio, that’s a fabulous concept, and I may give it a spin soon.

Hi Tim, Claudio from HardyPress here 🙂

Did you ever try the service? I would be super curious to know your impressions. You can also email me privately if you like.

Hi Claudio,

Oops, I forgot. Will try it out soon, thanks!