File this in the gut conjecture category, not grounded in anything beyond the fallible, non-artificial, questionable intelligence of my own grey matter (King, 1978).

Plus, I am sure it is better crafted elsewhere.

It’s no secret that my own practices and beliefs remain rooted in the value of an out-loud outboard brain approach (it is telling that this precious link lives solely in the Wayback Machine) to writing on this web site, on one of a few domains that have been my home since 2003. Twenty years later I see many folks, including colleagues who took this path early, outsourcing their writing to email newsletter platforms, Medium et al., and strangely enough, LinkedIn (which, with its front of always demanding logins, reeks to me of being this decade’s AOL). Even if Martin Weller asserts Blogs Are Back, Baby, I doubt there is much sign of a resurgence; it is more people firing up their dusty space to write one post about it being dusty.

It’s too much effort, too much work, to claim or reclaim your stuff anymore, to own your own ideas. Just toss it in the cloud.

There was this period that for me ran from the early, real MOOCs and then DS106, and that swelled for a while into an education approach of “Connected courses,” where students’ locus of effort was not in a system or a course space, but in their own. Things were readily connected by some voodoo acronym that these days is the internet equivalent of a waft of Bengay cream.

One assertion that just confounds me runs against what I will maintain to my dying day:

There is an argument I hear from colleagues who once were on this train: that the stake through the heart of Google Reader meant the end of using a feed reader. On a technical level, I never grokked that. I took my exported list of feeds from Google Reader and continued the same habit in NetNewsWire, Feedly, and for the last few years, Inoreader. In one space, I can sift, scan, and read deep or quick the stories from the sources I have selected, not ones pitched to me through some algorithmic churner.

I use it daily. The darn thing not only works, it works as efficiently and effectively as always.
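For anyone wondering why that move between readers was so painless: as far as I recall, the exported list of feeds is just a small OPML file, plain XML that any reader can import. Here is a minimal sketch of pulling the feed addresses back out of one. The sample data, the feed names, and the `list_feeds` function are all made up for illustration; a real export may carry more attributes.

```python
import xml.etree.ElementTree as ET

# Hypothetical sample of an OPML subscription export. The feed titles
# and URLs are placeholders, not real subscriptions.
SAMPLE_OPML = """<opml version="1.0">
  <head><title>Subscriptions</title></head>
  <body>
    <outline text="EdTech" title="EdTech">
      <outline type="rss" text="Example Blog One"
               xmlUrl="https://example.com/one/feed/"
               htmlUrl="https://example.com/one/"/>
    </outline>
    <outline type="rss" text="Example Blog Two"
             xmlUrl="https://example.org/two/rss.xml"
             htmlUrl="https://example.org/two/"/>
  </body>
</opml>
"""


def list_feeds(opml_text):
    """Return (title, feed_url) pairs for every feed in an OPML document."""
    root = ET.fromstring(opml_text)
    feeds = []
    # Feeds may be nested inside folder outlines, so walk the whole tree.
    for outline in root.iter("outline"):
        url = outline.get("xmlUrl")
        if url:  # folder outlines carry no xmlUrl, so they are skipped
            title = outline.get("text") or outline.get("title") or url
            feeds.append((title, url))
    return feeds


for title, url in list_feeds(SAMPLE_OPML):
    print(f"{title}: {url}")
```

The point is only that the list of sources lives in an open format you can carry from one reader to the next, with no platform holding it hostage.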

The other assertion that gets my goat is (sorry Martin, I am just borrowing from your comment, later expanded into a post, and at least you have never left dust):

it was a such a shame that Twitter effectively became everyone’s RSS and we stopped subscribing to blogs (mainly)

https://blog.edtechie.net/weblogs/blogs-are-back-baby/#comment-30780

Do not include me in that “everyone.” Yes, ever since the days of “what I ate for lunch,” and even in the darkening, end-of-times, Musked 2023 era, I do regularly find key nuggets, links, and people of interest there. But it by no means is efficient; I have to scroll and weed through a lot of palaver, and check my distraction level.

There is no way Twitter is an RSS replacement. Martin picked it up again in a follow-up BLOG (hah) post:

I loved RSS, it seemed like magic, you could just pull stuff in from different places, subscribe easily, aggregate feeds. Your blog reader was a little daily newspaper of quality content. It was the essence of what the web was made for.

I blame Twitter for killing this magic, increasingly people didn’t promote, or even know their RSS feeds, and social media became a much more effective way to distribute content. RSS often operated in the background still but you rarely saw the little RSS icon on people’s sites any more.

https://blog.edtechie.net/weblogs/the-newsletter-as-rss/

….

But with social media fragmenting and people either turning away from it completely or using it less, the absence of RSS awareness leaves us with a problem – how do we get all this lovely blog content out there? I know some hardcore people will still adhere to their blog readers, and I salute you. It’s possible that RSS will have a relabelling and a resurgence as I’m arguing blogging is experiencing. But until then, all that precious blog content is going unread.

To me this is conflating a tool for sensibly consuming content with ones meant for broadcasting outward. If the aim is mostly getting attention for your blog, that’s fine for some folks. But that’s not why I am here (hence most of my posts are not widely read or commented on); anything that happens from someone reading what I wrote is a bonus, not a goal. Social media for me is always the secondary exhaust fumes of what I publish first in my own space.

For me, I am not invested in pouring my content into a place where (a) I cannot keyword search to find past content; (b) I cannot easily find what I was doing on a given day; or (c) I cannot freely decide to remove it if I choose. This value only comes over the long haul, but I can swiftly zero in on what I was working on or thinking about on any given date going back 20 years… Say, for May 17, 2018, I have something I wrote or, via Flickr, places I was.

That is what I value; I hardly expect others to feel the same.

You Said Something About a Theory

Indeed! I get distracted as I write. That means I would fail as a dutiful worker in the ChatGPT word factory.

Again, I don’t expect heaps of people to treat their time online like me. There are so many emoji buttons to click (uh oh, bias snuck out again). And why would I expect the approaches and ideas from 2012 to the mid-2010s to continue? Stuff has to change.

I don’t think it can be blamed on Twitter/social media or another tech company bogeyman. It was this:

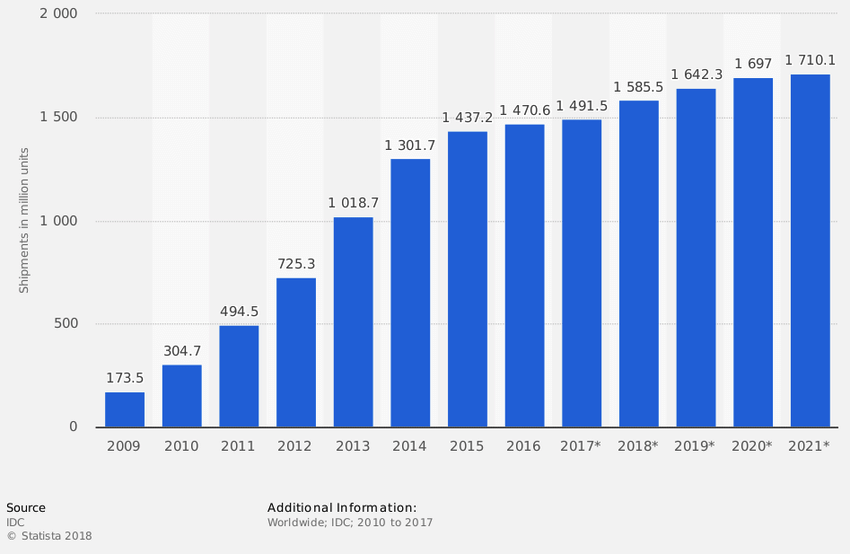

My theory is that it was the flip from time spent on larger computer/laptop screens to the small ones in our pockets. That photo was taken on a 2008 visit with university students in Japan. I had asked them about their use of computers at home for their school work, which baffled them. They owned no computing devices beyond the ones they put on the table for me.

I am just as much a part of it; I cannot imagine going anywhere now without the smartphone in my pocket. I do not even want to look at the stats that reveal my time on the tiny screen.

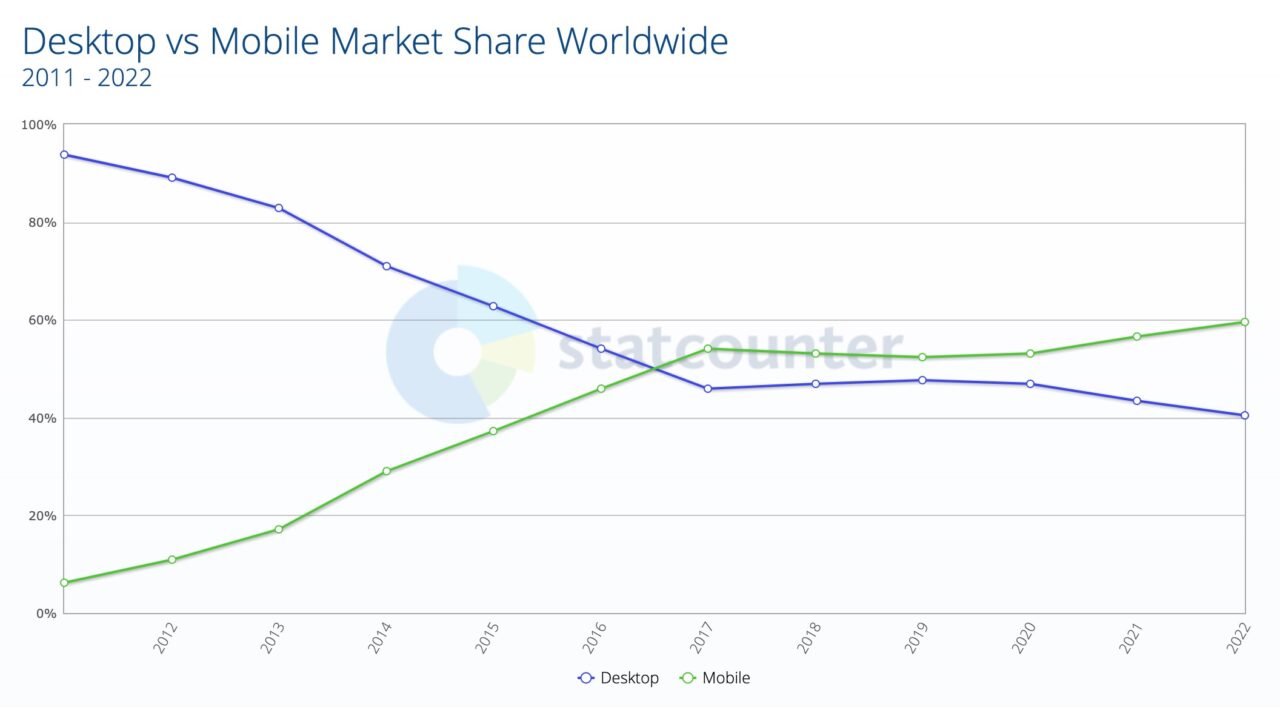

Look where the crossover happened:

Or just the five-fold increase over the same span in number of devices sent out in the world:

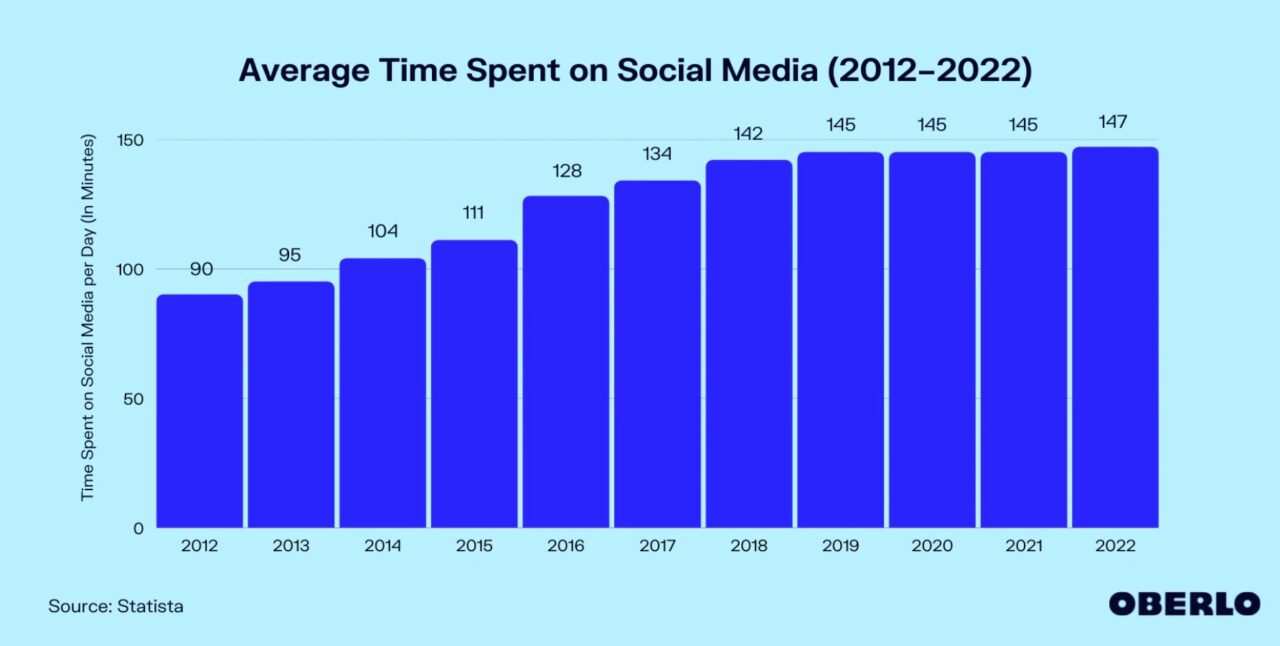

And looking at time spent in social media, this graph says what, an extra hour per day since 2012? Well, the sayings about statistics apply.

Maybe if I spent less time here, I could… well that’s maybe not valid either.

But time spent on that small screen is not really where I would be doing much writing or reading… right?

This is not an anti-phone rant, just a reach for an understanding of a decade-long shift in modes of writing/reading.

Please feel free to torch my theory or just ignore it. I have something else to write.

See you on the small screen.

I have started, over the last two or three years, perhaps longer, to do a lot more drafting on the small screen, simply because it is so much more convenient to dictate a draft wherever I am when an idea comes to me than to sit down at a keyboard later and try to remember what I was thinking.

Also, I found your blog post on Twitter. Yeah, I’m pretty sure that means I’m too lazy to figure out RSS anymore. But thanks to the small screen, I did find you here and was able to create this reply in a time and place I won’t mention.

There’s also the keyboard / writing surface. Phones and tablets are better for consumption than creation… And the “session time”: a phone is a very snackable device (swipe, swipe, look up, do something else, swipe, do something else); a laptop/desktop takes a bit more effort and session time is likely to be longer… so spending 20 mins hacking on a blog post fits into that context.

(I’m commenting from a laptop; if I were on a phone, I’d send you a comment on Twitter…)

I’ve found I’m putting more stuff into GitHub, and collating thematic material in an unbook form… github.com/psychemedia Lots of the repos are synced on my laptop, but if my laptop dies, I have the notes stashed on GitHub… Plus if I migrate to a new machine, I can selectively pull down different thematic collections.

Habits develop over long time periods. I find myself tethered to them, just as I am tethered to the network cable attached to this laptop.

Using a laptop with WiFi while wandering around the house doesn’t seem practical. Sitting down to type in different chairs seems silly.

The “keyboard” on my mobile is, to be generous, tiny, anti-typist, designed for finger or thumb tips less than half the size of mine. There is no way I’d have tried to write this comment if limited to that process.

My personal web site design looks okay on a laptop, but is a chore to use on a cellphone. I’m not sure it gets enough traffic to be worth a massive redesign.

Am I up to learning new habits at 76?

Will I someday fall in love with the cellphone screen?

Will voice-to-text replace touch typing?

(By the way, I came across your post via your fediverse post.)

So I read this post on my phone. I find the phone is OK for reading, less so for writing. So I use my phone time to read my feeds and to find and save links to Pocket (though any bookmarking tool would do); then later, I come back to them, pick out the best, and write about them.

I still use NetNewsWire, on my more modern OSes. Cloud syncing is the shiznit.