cc licensed flickr photo shared by mastrobiggo

Technically, it was OPML, but who cares what the ML it is; I’ve got a tip to share on how I’ve been using one single file to drive two dynamic resources. This is hardly in the realm of Tony Hirst mashup magic, but here it goes…

Since we are running several Horizon Projects a year, we’ve been setting up wikis in our hosted Wikispaces account, starting with a master wiki template, but customizing them as needed. Just in 2010 we’ve run one for the main Horizon Report, the K-12 Horizon Project, Iberoamerican Horizon Project (all in Spanish, that was fun), and just around the corner, the Museum Horizon Project.

Each one has a series of resources, and I’ve found what I think is a clever way to power two different ones from that one double-duty XML file. Here’s how.

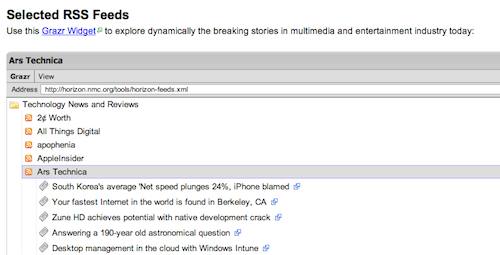

The first way I use it is for a simple feed reader, using a widget from grazr; I like the widget because it is simple, and opens feeds from a series of folders. See this one from the Horizon Report wiki:

Each feed is listed and opens to reveal headlines, and one more click unfolds the content. Sure, it’s easier to read feeds in a reader, but here on one page we have about 50 references constantly being refreshed. It’s all fed from an OPML/XML file, this one at http://horizon.nmc.org/tools/horizon-feeds.xml, which can be copied from the widget if you want the whole feed pack.

On the other project wikis, we add a second folder/outline set of feeds specific to that report.
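For anyone who has not peeked inside an OPML file, here is a rough sketch of the folder/feed layout described above. The sample file, folder names, and feed URLs are made-up placeholders (not the contents of the real horizon-feeds.xml), and `folders_and_feeds` is just a hypothetical helper name.

```python
import xml.etree.ElementTree as ET

# Hypothetical OPML with two folders; titles and feed URLs are placeholders.
SAMPLE_OPML = """<?xml version="1.0"?>
<opml version="1.0">
  <head><title>Horizon Feeds</title></head>
  <body>
    <outline title="Horizon Report" text="Horizon Report">
      <outline type="rss" title="Example Blog" text="Example Blog"
               xmlUrl="http://example.org/blog/feed" htmlUrl="http://example.org/blog/"/>
    </outline>
    <outline title="K-12" text="K-12">
      <outline type="rss" title="Example School News" text="Example School News"
               xmlUrl="http://example.com/news/rss" htmlUrl="http://example.com/news/"/>
    </outline>
  </body>
</opml>
"""


def folders_and_feeds(opml_text):
    """Map each top-level folder title to the feed URLs nested inside it."""
    root = ET.fromstring(opml_text)
    result = {}
    for folder in root.find("body").findall("outline"):
        # Feed entries carry an xmlUrl attribute; the folder itself does not.
        feeds = [o.get("xmlUrl") for o in folder.iter("outline") if o.get("xmlUrl")]
        result[folder.get("title")] = feeds
    return result


for folder, feeds in folders_and_feeds(SAMPLE_OPML).items():
    print(folder, feeds)
```

Adding a second folder for a specific report is just a matter of adding another top-level `outline` element with its own feeds nested inside.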

The beauty here is that to update the widget, I simply edit the XML file.

I generate this in Google Reader; we have a specific research account set up, and make a label set in that account for each project. For a big set, I just export the whole thing, parse out the sections I need, edit the title tags in the XML, and it’s good to go.
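I do the trimming by hand, but the "parse out the sections I need" step could be scripted along these lines. This is only a sketch: it assumes the export puts each label in a top-level `outline` whose `title` attribute is the label name, and the sample export, label names, and the `extract_sections` helper are all invented for illustration.

```python
import xml.etree.ElementTree as ET

# Hypothetical stand-in for a feed reader export; labels and feed URLs are made up.
EXPORT = """<opml version="1.0">
  <head><title>research subscriptions</title></head>
  <body>
    <outline title="horizon-2010" text="horizon-2010">
      <outline type="rss" title="Example Blog" text="Example Blog"
               xmlUrl="http://example.org/blog/feed" htmlUrl="http://example.org/blog/"/>
    </outline>
    <outline title="museum" text="museum">
      <outline type="rss" title="Example Museum" text="Example Museum"
               xmlUrl="http://example.com/museum/rss" htmlUrl="http://example.com/museum/"/>
    </outline>
  </body>
</opml>
"""


def extract_sections(opml_text, keep_labels, new_title):
    """Keep only the top-level folders named in keep_labels and retitle the file."""
    root = ET.fromstring(opml_text)
    title = root.find("head/title")
    if title is not None:
        title.text = new_title
    body = root.find("body")
    # Iterate over a copy, since we remove children while looping.
    for outline in list(body):
        if outline.get("title") not in keep_labels:
            body.remove(outline)
    return ET.tostring(root, encoding="unicode")


trimmed = extract_sections(EXPORT, {"horizon-2010"}, "Horizon Feeds")
print(trimmed)
```

The returned string is what you would save as the XML file that the widget points at.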

And if grazr ever went down the ghost site path, I’d probably just generate the feeds from Google Reader’s widgets.

That’s the easier trick.

I wrote before about the power of creating a Custom Google Search engine (CSE), using Google’s search tools to search just the sites you select. It seemed sensible to me to have a Horizon Project resource that searched a set of sites we selected... and HEY! We already selected a bunch of sites for the feed display above (foreshadowing...).

In typical mode, you have to add all the sites you want to include when you set up a CSE, but that gets tedious and repetitive. I was pretty sure I could use the same XML file as above, but the documentation is mildly dense on this point for the casual user. Fortunately, I talked to my good buddy Scott Leslie, who had done something to generate a CSE from an RSS feed of sites he tagged in delicious (I think that’s what he did).

Scott gave me enough pointers to get the method down.

- Create a new CSE

- The basics section is just all the descriptive stuff.

- You then go to the Sites section. Typically, this is where you’d enter the URLs of sites you want to be part of the search, but of course, we are hoping to avoid that. However, I found I could not get to the next important parts without entering one URL, so I just chose one of the ones in my feed list to add as a temporary step (when we are done, you can remove this one). You can test the CSE now; it should just search that single site.

- The magic happens in the “Advanced” section. What you will do is create an “Annotations” file, which tells the CSE which sites to use. The first thing to do is note the label ID under the Upload Annotations header

e.g. look for this sentence and grab the bolded label:

Label sites with _cse_xxxxxxxxxxxx if you want to include them in your Custom Search Engine.

- Now the magic part: use the MakeAnnotations “Tool” (I’m not sure where the tool itself lives; I just saw the formula needed to make the right URL). What you are doing is simply constructing a URL that generates the data the CSE needs. For the Horizon one, it looks something like:

http://www.google.com/cse/tools/makeannotations?url=horizon.nmc.org%2Ftools%2Fhorizon-feeds.xml&label=_cse_xxxxxxxx&pattern=path

where for the parameter url=xxxxxx, xxxxxx is the encoded version of the URL of the OPML feed (sans the “http://”). For the Horizon Report, it is http://horizon.nmc.org/tools/horizon-feeds.xml, so we need to drop the “http://” and replace all of the “/” with “%2F”. The next one, label=, is simply the ID label we grabbed in the previous step. The last parameter, pattern=, controls how much of each site is searched: with pattern=path, the search stays within the path of the listed URL (like http://www.mycoolsite.com/cool/blog/); if you want the CSE to use the entire domain, use pattern=host.

- Copy that whole URL, and to make sure it is set, go to the Developer Console, paste it in, and click refresh. I think this does some re-indexing or other magic voodoo. I’ve never found the test search to work from here.

- The last step is to go back to the Advanced section, and paste that same URL in the Annotations field and click Add.
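The URL assembly in the MakeAnnotations step above can be sketched in a few lines, in case hand-encoding slashes is not your idea of fun. The `make_annotations_url` helper is a made-up name, the label is the same placeholder as in the example URL, and the endpoint and parameters are simply the ones shown above; I have not verified anything beyond that.

```python
from urllib.parse import quote


def make_annotations_url(opml_url, label, pattern="path"):
    """Build the makeannotations URL described in the steps above."""
    # Drop the scheme, since the recipe wants the URL sans "http://".
    for scheme in ("http://", "https://"):
        if opml_url.startswith(scheme):
            opml_url = opml_url[len(scheme):]
            break
    # safe="" forces "/" to be encoded as %2F.
    encoded = quote(opml_url, safe="")
    return (
        "http://www.google.com/cse/tools/makeannotations"
        f"?url={encoded}&label={label}&pattern={pattern}"
    )


# Placeholder label; use the _cse_ ID from your own Advanced section.
print(make_annotations_url(
    "http://horizon.nmc.org/tools/horizon-feeds.xml", "_cse_xxxxxxxx"
))
```

The printed URL is the one to paste in and then add to the Annotations field.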

That should be it. If you test your CSE, it should now be returning results from the sources you listed. If it works, you can delete the site you manually pasted in the Sites box. The last thing is to Get Code, copy the embed code, and add it to your web site; for Wikispaces, it’s just the gunk you paste in an HTML widget.

The result is a Google CSE that limits its scope to just the sources in your feed reader file; for example, http://horizon.wiki.nmc.org/Google+Custom+Search.

And when you update your XML file with new feeds, the CSE should also pick up those sources.

Once sorted out, this has been easy to replicate. The time-consuming task is finding feeds (amazing that some sites still lack them or make them hard to find).

But that’s how I’ve put one XML file to work doing two different tasks. I bet YMWV (your mileage will vary), but let me know if I goofed a step.

For me, it’s been a good toofer.

cc licensed flickr photo shared by A30_Tsitika