In several conversations I hear my own brain echoes of responses to AI mania of both fatigue and hype wariness but also some curiosity and itches to explore.

My own reckoning I reckon is the comparisons to the disruptive force of the early web was we were not clubbed over the head with the web in the early 1990s. There was almost an invitation to jump in the web, not a blaring of YOU WILL BE REDUNDANT LUDDITE IF YOPU FAIL TO LINE UP AND MARCH.

So here we are.

It’s not even realistic to talk about “it” as a singular thing, nor just proclaiming how bad / good / innovative / destructive force “it” is as a whole. Don’t we have sufficient thought pieces and newsletter gunk? I guess not.

That’s why I appreciate so much the thoughtful writing on (always) of Jon Udell in his series of posts on LLMs, who is not talking generally from some podium, but profoundly sharing what he is doing in actual real work using the thing. Line that up to with the Middlebury College Digital Detox on Demystifying AI which offers hands on doing type activities.

I’m rather bored with the bashing away at AI prompt boxes, which is why its refreshing to go back to Hugging Face to dip into more edgy stuff (where I found a worthy playground last May).

My curiosity was at least raised from a coma with a mentions somewhere of the MyShell OpenVoice set up to play with at Hugging Face:

… a versatile instant voice cloning approach that requires only a short audio clip from the reference speaker to replicate their voice and generate speech in multiple languages. OpenVoice enables granular control over voice styles, including emotion, accent, rhythm, pauses, and intonation, in addition to replicating the tone color of the reference speaker. OpenVoice also achieves zero-shot cross-lingual voice cloning for languages not included in the massive-speaker training set.

https://huggingface.co/spaces/myshell-ai/OpenVoice

Well maybe that’s not so clear. There’s a bit more at the MyShell project page for OpenVoice. If I can do my un-artificial intelligence summary, OpenVoice uses a very short audio source clip (like 30 seconds) to turn any entered text into audio, but also with an ability to have it speak in different pseudo emotion styles AND supposedly it can also generate it in other languages. All of this apparently done in a smaller technical footprint.

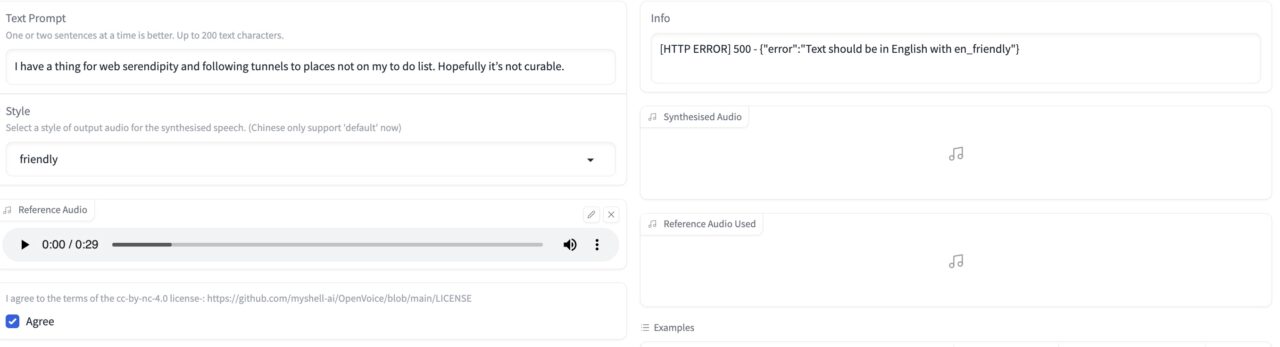

I actually read none of that, I just charged in. I tried the opening sentence from a recent blog post

I have a thing for web serendipity and following tunnels to places not on my to do list. Hopefully it’s not curable.

I plopped that in the box on the left, expecting to get a “friendly” emoted version….

…. but apparently the “intelligence” thought my writings where not in English. Well, that is some reassuring feedback on my style! I went back to the the blog pile once more for a different test phrase to generate some variants, using another opening line from What’s the Diff

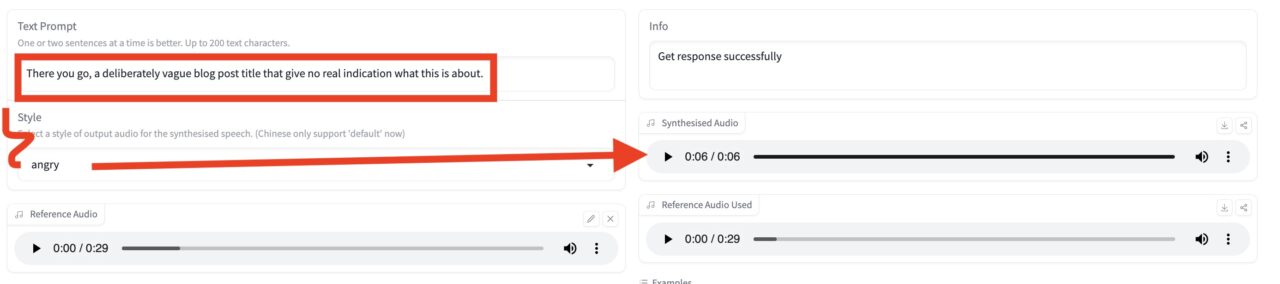

There you go, a deliberately vague blog post title that give no real indication what this is about.

I start with anger!

Here are some variants

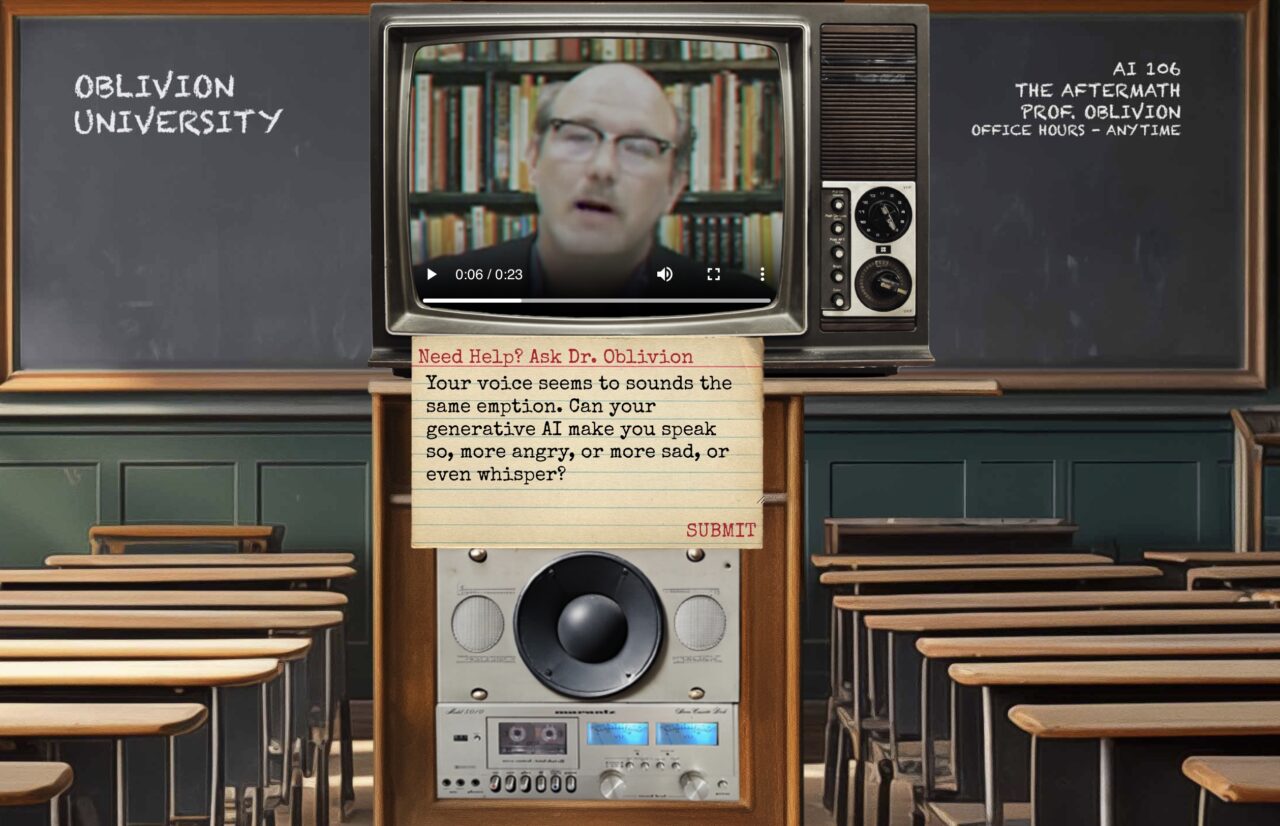

This seems intriguing, as I recall from Michael Branson-Smith describing his amazing work turning Dr Oblivion into an AI spewing audio bot, how he. had little control over the phrasing, that somehow the AI he used interjected those pauses that make it seem more real.

Why not ask the brilliant Dr. O?

Your voice seems to sounds the same emption. Can your generative AI make you speak so, more angry, or more sad, or even whisper?

To which he more or less asks, “Can you pick up what I’m generating down?”

Just because it’s easy and worthwhile to do with audio, I drop this dulcet wisdom into MacWhisper to get a transcript. Yes, in his smug superior tone, he swats me down:

How fascinating that you’re curious about the emotional range of my generative AI.

While it does possess the capability to emulate different emotions in speech, that’s not the primary function I choose to focus on.

My goal with artificial intelligence is to explore the ways it can enhance our understanding of media, technology, and their impact on society.

So keep your emotions in check and let’s dive deep into the depths of artificial intelligence.

Dr. Oblivion responds to my question (I would not call it an answer)

I don’t know what I’ve really accomplished here, but I’ve played, and it was fun. But when you play with OpenVoice, it’s impressive what it can do of its really only training on that little bit of source audio. But then again, while I have played and poked, I have understanding what it is doing.

And this is where my botheredness rises up, and says…. “end this damned post”!

Featured Image: 2023/365/63 Infinite Clones flickr photo by cogdogblog shared into the public domain using Creative Commons Public Domain Dedication (CC0)

off now to explore …

The Dr. Oblivion one was surprisingly solid! Hugging Face is an endless playground of weird options.

I had mixed success with OpenVoice but I was trying with some pretty exaggerated voices (Queen Elizabeth II, Mr. T, etc.). I started to try to run it on a server somewhere but ran out of time. The Discord server for it was also a bit too Anime-girlfriend-oriented for my taste.

I got a free account on https://lovo.ai/ and used Genny to make a voice of QEII and it was pretty good.

What I don’t think I’m conveying to the Detox audience is that this stuff has moved so fast and the fact that we can do crazy stuff like this for free right now is amazing (sure, scary too). When they say certain tools would have been impressive 10 or 15 years ago, they really mean a year or two- 5 tops. The time scale for how long ago something would have been amazing is really out of alignment.

You’ve gone way deeper than I. That’s a key observation of underestimating the speed and complexity of what has become available, to me a product of not only the lack of transparency of the tools (which is an easy critical card to play), but also I wonder if our wee brains can truly have a mental model for information, even “thoughts” rendered in 200 dimensional vectorspace (inside joke for you).

My other wonder is what it means if getting high end results easily necessarily leads to more creative expression, capability. Much of the charm and learning for me in the early DS106 days was doing things crudely, amateurishly. If we can “easily” pop out professional grade images and audio (and eventually video) does it mean we will be able to more creative works, or just an approximation thereof.

For Dr. AI Oblivion I have been back and forthing with Michael Branson-Smith, he is actively pursing having Dr. O generated as video. I respect the goal to make realistic video. But I think it works fantastically as audio. The beauty of good audio is our minds fill in the spaces that make him see alive. I lost track of where I heard it, but I recall Ira Glass describing why the This American Life story about the gun shootings at Harper High School were more impactful as radio/audio versus trying to show in video.

Thanks for all you have hatched for the Digital Detox.

I think you’re right about that.

The weird thing is that the human (art?) part of tech often seems to come from having to struggle with it or appreciating/creating the odd artifacts. I have some rambling stuff in my head about progressive moves away from the body/art connection and then the distance created by various technologies . . . something starting with painting/drawing maybe, then digital art/photography, and then to scanning and programmatic stuff, and finally to AI prompts. There are weird diversions along those paths.

It’s almost like the better the tech is at doing what you want without requiring skill, the lower the artistic aspects and yet, also ending up with what you want as an artist is what matters (I think, maybe) so is the complexity/difficulty in getting there a desired barrier? a flawed process? the way we justify it as art? I’m not sure how much all this stuff gets wrapped up in the same snobby stuff that results in exclusionary language in domain expertise land.

Never a great sign that I have though enough when I have so many slashes.

Thank you for playing along with Detox and for continuing to set the blogging bar. Somebody has to do it!