There you go, a deliberately vague blog post title that gives no real indication of what this is about. Heck, at this point, 1.5 sentences in, I am asking myself if I know.

Note: I found this post hanging out in my Drafts. Was it left undone? Did I change my mind on publishing? I don’t know, what’s the diff? Just clicking publish now to clean house.

Like other colleagues who still keep this way of public writing alive (hail bava!), I have been spinning my wheels trying to latch on to the Artificial Intelligence excitement wave. I was somewhat interested a year or more ago when the early image generators (e.g. Craiyon) came on the scene. It’s one thing to type text in a ChatGPT box and get paragraphs of text back, but getting a generated image back just from plopping free-form words into a chat box is still, if I sit back and pause, a bit surreal: I am getting something for almost no effort.

Ignoring, yes, all the well-known problems, impacts, and dangers of what goes on inside the magic machines, I find it interesting that our interface for doing this is as simple and familiar as a chat text box.

Maybe that’s where things get intertwined: this is the same mode of communication we use countless times per day to message friends, family, and colleagues, so it carries a whiff of presence, a feeling that we are doing more than interacting with programmed logic.

Typing text in a box, seeing and analyzing what I get in return, and integrating it into what I am trying to make or build is hardly new. I had read those stories of ChatGPT generating working WordPress plugins and JavaScript-powered web code.

I had tried a few of these myself, and ran into what gets called “hallucinating” (which it does not really do; it’s just wrong). I must not be a good prompt maker, because I always got code that looked right but never worked. There was one case, which I can’t fully remember or find in my history, where I asked ChatGPT to write some kind of data query that gave me empty results every time I tried it. “It” kept insisting that it was running the same query and getting valid results. I pushed it for proof, and finally it said it could only test by verifying that the syntax was correct; it was never really running the queries.

And then I realized I had spent a good chunk of time in this back-and-forth, pushing it not just to say “this should work” but to prove it.

Some will say these are the literacies that users of these systems need to build: how to push and rework prompts to get the desired results.

But this feels not all that different from something we have used so often that we take it for granted. I am wondering…

I’ve learned through sheer iteration (okay, and reading guides and blog posts) the tricks and methods for getting better web search results. I get back a whole raft of stuff, and I have developed a “spidey” sense (not really accurate much of the time) to sift through it. And while I conceptually understand that Google scrapes the web and creates some kind of index based on keywords and link frequency, truly, how this thing works is kept behind an iron wall (at least the company is not called “OpenSearch”).

I know this is neither profound nor insightful. Here is a super trivial experience.

Recently I was working on a spreadsheet of people’s names and emails, along with the multiple tasks I was assigning them. All I was doing was marking a person’s column for each task I assigned to them. On a second sheet, I needed to list all the tasks and, to the right of each one, which people were assigned.

Now, this is one where many people will know the function off the top of their head. I love doing spreadsheet formulas, but they come in bursts, and I often forget what I had done before. I’d done ones with VLOOKUPs and HLOOKUPs, but that’s not the same thing. I reached for my usual text box (web search), but my keyword mojo was not clicking. A lot of what came back was more ads for services than answers; it takes a lot of sifting through ads and promos to get an answer. I went down a few StackExchange holes, but did not find the same problem.

Out of exasperation, I tossed it into ChatGPT:

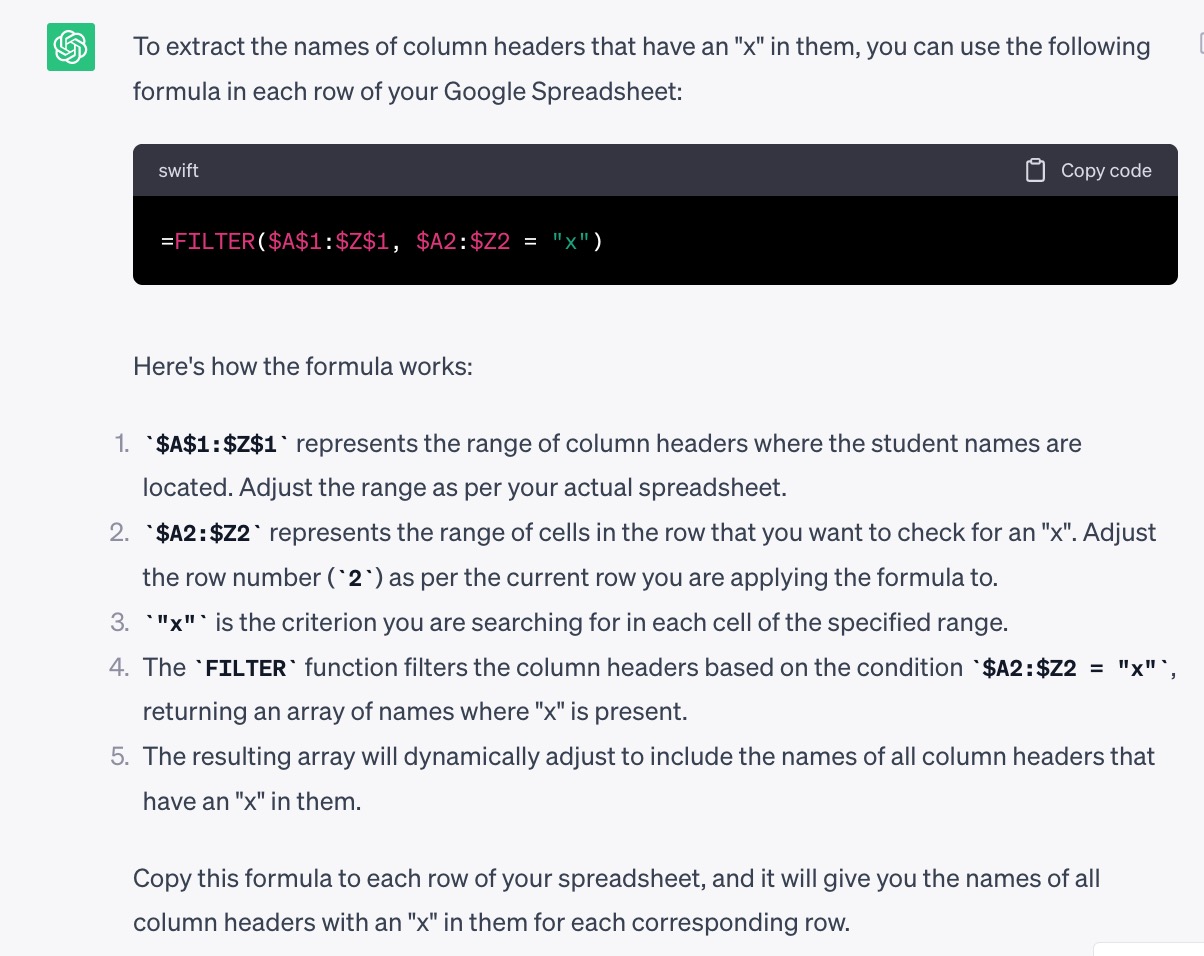

> I have a Google spreadsheet with column headers of student’s names, like “Mary”, “Juan”, “Eleanor”, “Mabel” and rows representing 100 different papers I am assigning for them to review, where I have put an “x” in a cell if say I want Mary and Mabel to be a reviewer. Write a formula I could use for each row to find names of all column headers with an “x” in them.


Of course, now I know that the function I was seeking was FILTER (at which most readers will be groaning, “doh! @cogdog is a knob”), but this is one of the few times I got a working bit of code on the first try.
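For anyone else whose formula memory comes in bursts, the idea is: for each paper row, keep only the header names whose cell holds an “x”. In Google Sheets, one way to write that might be `=IFERROR(TEXTJOIN(", ", TRUE, FILTER($B$1:$E$1, B2:E2="x")), "")`, assuming the names sit in B1:E1 and the first paper’s marks are in B2:E2 (a layout made up for this example, and not necessarily the formula ChatGPT gave me). The IFERROR is there because FILTER returns an error when a row has no “x” at all. Here is the same logic sketched in Python with hypothetical sample data, just to show what the formula is doing:

```python
# A sketch of the "which column headers have an x in this row?" logic.
# The names, papers, and marks below are hypothetical sample data.

HEADERS = ["Mary", "Juan", "Eleanor", "Mabel"]  # one column per reviewer

# One row per paper; "x" marks an assigned reviewer, blank means not assigned.
PAPERS = {
    "Paper 1": ["x", "", "", "x"],
    "Paper 2": ["", "x", "X", ""],
    "Paper 3": ["", "", "", ""],
}


def reviewers_for(headers, row, mark="x"):
    """Keep the header names whose cell in this row equals the mark.

    Ignores case (text comparison with = in Sheets is not case-sensitive
    either) and, as an extra assumption, surrounding spaces.
    """
    return [name for name, cell in zip(headers, row)
            if cell.strip().lower() == mark.lower()]


def summary_sheet(headers, papers):
    """Build the 'second sheet': each paper mapped to a comma-joined list of names.

    A paper with no marks gets an empty string, like the IFERROR fallback.
    """
    return {paper: ", ".join(reviewers_for(headers, row))
            for paper, row in papers.items()}


if __name__ == "__main__":
    for paper, names in summary_sheet(HEADERS, PAPERS).items():
        print(f"{paper}: {names}")
```

Running it prints each paper followed by its reviewers, e.g. “Paper 1: Mary, Mabel”, while Paper 3, with no marks, comes out empty.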

I am not describing this as any kind of brilliant statement of what ChatGPT can do. But I think about the approach I’ve used over the last few decades to find, by web search, how to do tech things I may have forgotten how to do:

- Type words in a box

- Press return

- Try to guess best result from results

- Wade through a lot of verbiage and ads and other stuff

- Find a bit of code to try

- Test it out

- If it does not work, go back to try a different result

Of course, in suggesting there’s not much difference between these approaches, I am glossing over the significant problems AI search presents.

Still, the ways I am doing these things feel the same.


Hi Alan

I use the same method.

I’m amazed at the fear and loathing in the academic conversation around LLMs and what they can do. Few of the comments or projects seem to reflect knowledge of how sketchy the “AI” results are. I’m with Kate Crawford; “Artificial Intelligence is neither.”

In webinar chats I share Princeton Professor Harry Frankfurt’s small book “On Bullshit.”

There are correct statements, there are knowingly false statements, and there is bullshit. His model works equally well for politics and LLMs.

I pretty much limit myself to using ChatGPT as an alternative to Stack Overflow (not least because the Stack Overflow results returned by web search engines, at least, are becoming increasingly less useful for me: poor-quality questions that seem to have SEO mojo, no answer or a weak answer, out-of-date responses that had historical weight but are not so useful now, etc.).

But I’m increasingly anti-generative things for a whole range of reasons, not least because they’re a resource-intensive way of doing things that can be done more flexibly, efficiently, effectively and, if their organisation allows it, humanely, by people who have taken the time and care to develop some sort of capacity (basic knowledge and skills, and some sort of care and pride and humane criticality in what they do) in the area in which they work.

A lot of the conversational AI and RAG stuff seems to add little over good search tools (which is something my org at least has never had any interest in). And I’d rather find out-of-copyright images from 19th-century fairy tale collections on archive.org than use a genAI image generator (and if I wanted to create my own, I’d spend a couple of years learning how to draw, or ask someone I know to draw me whatever and then pay them for it in some way).

Oh, I am right with you in doubting that AI is an alternative to applying critical eyes to search results. I wrote this more as an observation that MY process of search, winnow, test, recast was not all that different.

And for images, there’s a dull sameness to the ones most people produce from the generative image makers. I have seen some stunning exceptions, but I imagine they take many rounds of reprompting. And just last night I had an image I needed to make much wider than its original proportions (for a banner), and I have to admit I was a bit impressed by how Photoshop’s generative fill extended the background.

It’s a mixed bag, but I am firm that the act of doing things myself, a bit more hands-on craft, is a more satisfying process than waiting for the microwave to ding.