Even before a tl;dr (as if I do them anyhow), I am about as far from a web accessibility expert as my dog.

But I’ve taken a curious interest since summer 2018, when Twitter made a big deal of announcing the option to add alternative text to tweeted images. Fire off a few PR statements, get the stories echoed to the pious outlets of EngadgetWiredTechCrunchETC, and call it done. Never mind that adding this to your images means turning ON an option 15th in a list of preferences.

In my own informal research (meaning I scroll through my timeline and count how many tweeted images have alt text), I’d say the rate of people doing this is about 0.05% of tweets. But it’s changed my approach to not just tweeting but also blogging: I try to up my game as much as possible, not only using alt text but also making it useful.

Not to be outdone, Instagram is in the game too. In their announcement of Creating a More Accessible Instagram, they proclaim:

We are introducing two new improvements to make it easier for people with visual impairments to use Instagram. With more than 285 million people in the world who have visual impairments, we know there are many people who could benefit from a more accessible Instagram.

Creating a More Accessible Instagram

I sure hoped they talked to a few of those 285 million people…

First, we’re introducing automatic alternative text so you can hear descriptions of photos through your screen reader when you use Feed, Explore and Profile. This feature uses object recognition technology to generate a description of photos for screen readers so you can hear a list of items that photos may contain as you browse the app.

same source!

I wonder how useful this really is to people who are visually impaired.

Imagine your IG experience as a robo voice reading the list below (if you think I made this up, read Bored Panda’s Somebody Is Showing How Instagram Photos Are All Starting To Look The Same And It’s Pretty Freaky):

cat

back of head with outdoorsy hat

person standing on top of car in wild

cat

person centered rowing in canoe

plate of food

cat

Note that “first” in the Instagram announcement is the AI solution. Following it is poor John Henry, hammering out alt text by hand, one photo at a time:

Next, we’re introducing custom alternative text so you can add a richer description of your photos when you upload a photo. People using screen readers will be able to hear this description.

Yup, from Creating a More Accessible Instagram

I wanted to know what the experience was like. It was a few days before my app updated with the feature.

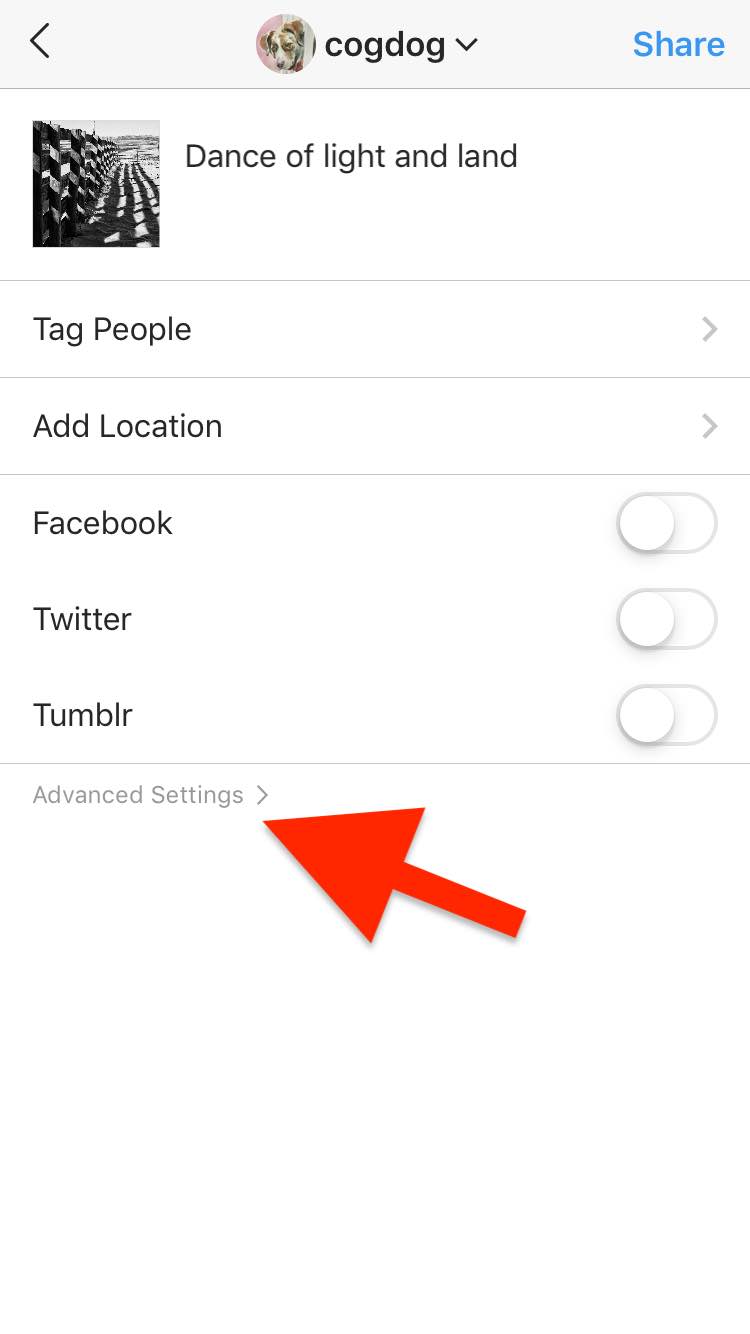

At least unlike Twitter, you do not have to choose to do the right thing and enable the alt text description field through some arcane option (and Instagram has like 70 different preference settings). It’s there, very obvious before you click the Share button, closest to where the user’s eye ends up.

Another editing box is where I can add my alt text.

And here is the posted photo, as if you could tell there was any alt text!

But if you have resilience, you can dig down deep in the source code (that is, if you actually can see web source code any more or care to look).

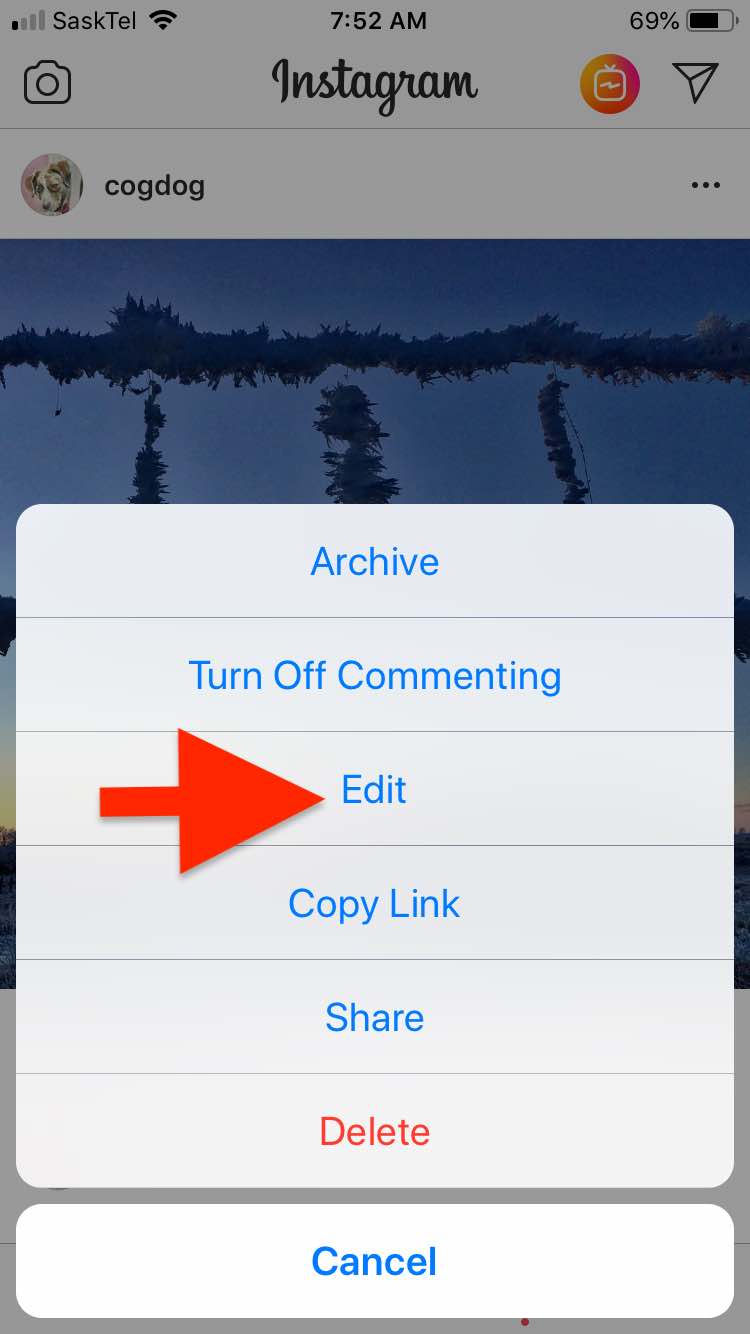

It’s almost easier to add alt text to an existing photo. Just open the top right … menu on your own photo:

Then click the EDIT link in the menu:

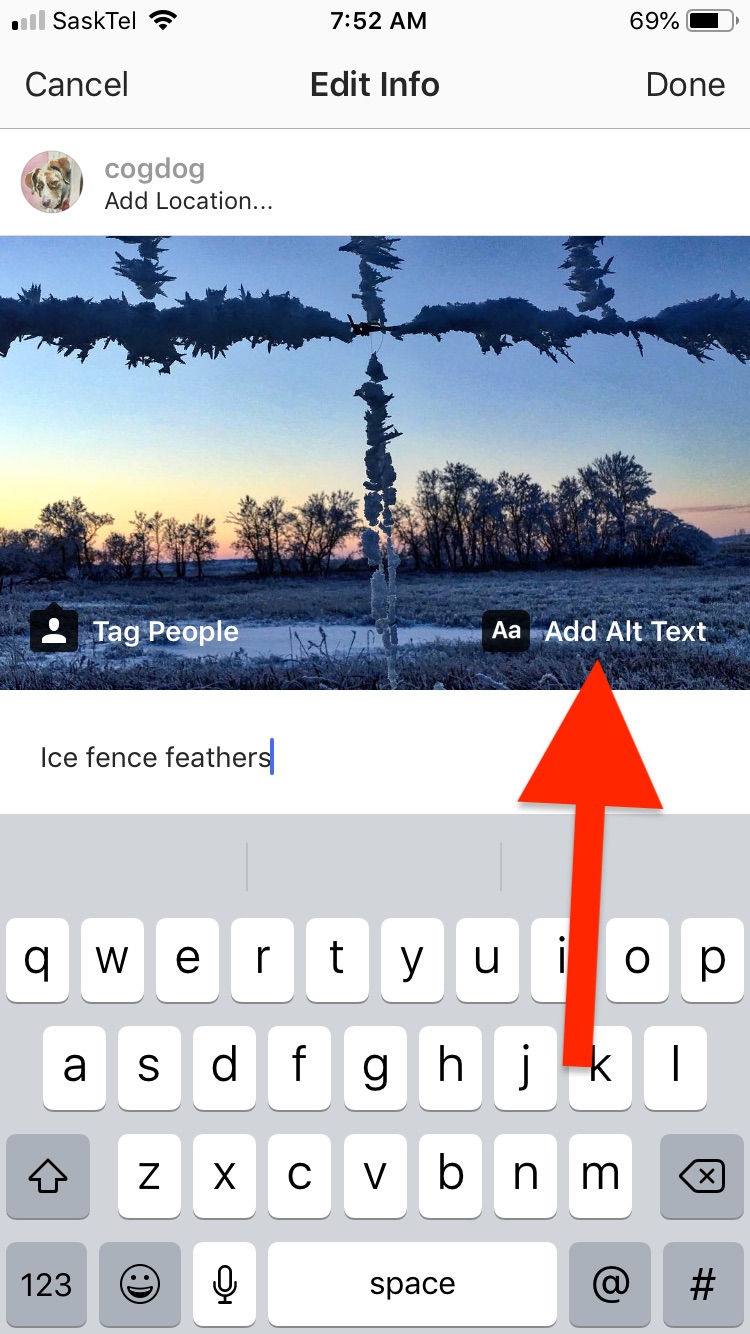

And here you can see a more “accessible” place and interface for adding Alt text!

I rummaged around a few of my photos. On some of them the automated text reads

"No automatic alt text available"

This photo has one (again buried in the source HTML):

Image may contain: tree, sky, outdoor and nature

How helpful is that?

Other times, Instagram seems to just insert my caption as an alt= text — which is not really always useful either. Easy to code, maybe…
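For anyone who would rather not squint at page source by hand, here is a rough sketch of how you could pull the alt text out of a saved page and sort it into the kinds I ran into. It uses only Python's standard `html.parser`. The sample HTML, the file names in it, the custom description, and the category labels are all made up for illustration; only the two quoted strings ("No automatic alt text available" and "Image may contain:") come from what I actually saw, and I am assuming they appear at the start of the `alt` value. Note that it cannot tell a hand-written description from a copied caption; both land in "custom".

```python
from html.parser import HTMLParser


class AltTextCollector(HTMLParser):
    """Collect the alt attribute of every <img> tag fed to the parser."""

    def __init__(self):
        super().__init__()
        self.alts = []  # one entry per <img>; None when there is no alt value

    def handle_starttag(self, tag, attrs):
        if tag == "img":
            self.alts.append(dict(attrs).get("alt"))


def classify_alt(alt):
    """Sort an alt value into a rough (hypothetical) category."""
    if alt is None or not alt.strip():
        return "missing"
    if alt.startswith("No automatic alt text available"):
        return "unavailable"
    if alt.startswith("Image may contain:"):
        return "automatic"
    return "custom"


def summarize(html):
    """Return one category per <img> tag, in document order."""
    collector = AltTextCollector()
    collector.feed(html)
    return [classify_alt(alt) for alt in collector.alts]


# Made-up sample markup, not real Instagram source.
SAMPLE_HTML = """
<img src="a.jpg" alt="Image may contain: tree, sky, outdoor and nature">
<img src="b.jpg" alt="No automatic alt text available">
<img src="c.jpg" alt="My dog asleep on a red couch, one ear flopped over his eyes">
<img src="d.jpg">
"""

if __name__ == "__main__":
    for category in summarize(SAMPLE_HTML):
        print(category)
```

Run against a page of your own photos, a tally of these categories would be a slightly less informal version of my timeline-scrolling research.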

But what is it like for someone to navigate Instagram this way? What good is it for me as a sighted critic and their sighted engineers to design an interface?

I tried a bit using VoiceOver on my iOS device and nearly went berserk trying to even get the app open, much less getting it to read me the image alt tags. Just turning off the feature led to howling.

So I activated Voice Assist on my laptop, and spent about 10 minutes (mostly due to my own inexperience in navigating) trying to get to the image above with the alt text I added via the Instagram app.

So, yes, on paper and in PR statements, Instagram has added accessibility features. Does that really make it accessible to people without vision? I cannot speak for them. Is it meaningful if it’s an obstacle course of an interface for people to add alt text, or do we rush on by and leave it to the AI?

To me that is likely what they are after. Are any of us who add alt text manually just working to verify their AI?

The best outcome for me in looking through this stuff is finding Veronica with Four Eyes, someone who really has a visual challenge, and who writes really helpful guides to accessibility (as opposed to PR statements).

Because accessibility is much more than adding a few features….

Featured Image: Did some re-writing at the top of an image of the Empress of Britain, a public domain image from the Library of Congress. This is my modified version:
